USS sound waves are converted into electrical signals and then transformed into digital signals allowing them to be processed with Python’s GPIO library. An ultrasonic sensor is a sonar-enabled device that works by emitting sound waves and calculating distance based on the time it takes for the sound wave to hit an object and travel back to the sensor. Rgb_tensor = tf.expand_dims(rgb_tensor, 0)ĭue to their robustness, wide availability, and ultra-low cost, ultrasonic sensors (USSs) are the most commonly used proximity sensors amongst roboticists. Rgb_tensor = tf.convert_to_tensor(rgb, dtype=tf.uint8) Rgb = cv2.cvtColor(inp, cv2.COLOR_BGR2RGB) Inp = cv2.resize(image, (widget, height)) # Resize the image to match the input size of the model Import tensorflow_hub as hub import cv2 import numpy import pandas import tensorflow as tf import matplotlib.pyplot as plt For processing, we’ll represent the image in numerical format (similar to an array conversion).Because the model that we’ll use only accepts images of a specific size and color palette, we’ll load each image using OpenCV’s imread module and resize their dimensions to 1028 x 1028, and then convert each input image’s palette from RGB to BGR.Import the Python libraries required for image processing.Optionally, cameras can also be used to measure how far objects are from the robot, although roboticists may choose to use dedicated sensors for these kinds of secondary tasks.Deep learning enables the accurate classification of the objects detected by the camera’s input.Arrays are analyzed by OpenCV or scikit-image algorithms in order to recognize objects and analyze the scene in front of the robot in real time.Frames are converted into a multidimensional array using a library like NumPy.Cameras are used to capture either still images (photos) or a continuous stream of images (videos) from the environment.

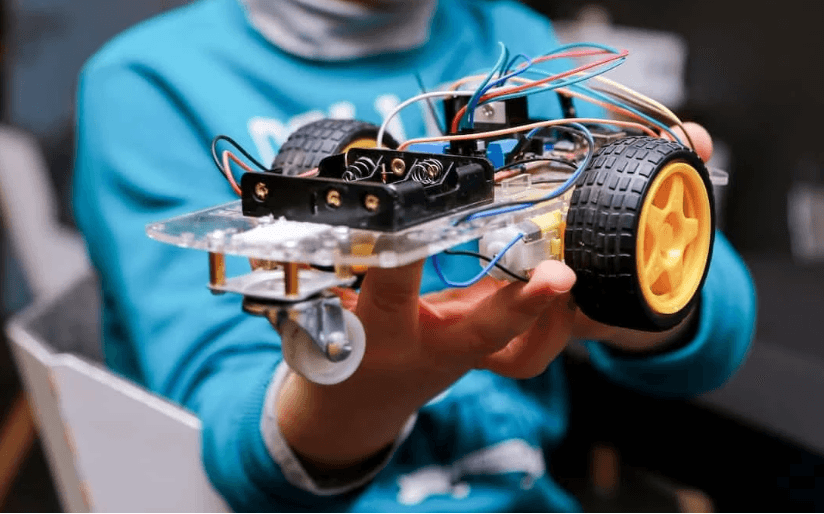

Frameworks such as OpenCV and scikit-image.Monocular and stereo cameras, radar sensors, sonar sensors, and LiDAR sensors.The perception system requires both hardware and software, including: Perception is basically the robot’s ability to “see.” Before a robot can make a decision and react accordingly, it has to accurately understand its immediate environment, including distinctly identifying people, signs, and objects, as well as capturing the progress of events in its immediate vicinity. Control – how can the robot best interact with the objects in the environment?.Planning and Prediction – how can the robot best navigate the environment?.Perception – what does the environment look like?.Most robotics processes are characterized by three steps: This blog post discusses how you can take advantage of Python’s open source assets to manipulate robots via sensors (cameras), actuators (motors), and embedded controllers (Raspberry Pi). One of the best ways to facilitate this transfer is using Python, which is widely used in the field of robotics for data collection, processing, and low-level hardware control using systems like ROS (Robotic Operating System) and frameworks like OpenCV and TensorFlow. The field of robotics is trying to recreate these cognitive abilities and corresponding actions, then transfer these skills to robots of all sizes, from mechanical patrol dogs to self-driving cars to pick-and-place robotic arms used in assembly lines. It’s a simple task, but the technological concepts involved in creating a vacuum cleaning robot are quite complex, including:įor humans, the ability to perceive and accurately interpret our immediate environment, and then act accordingly, is second nature. Some even have the ability to communicate with other electronic devices, such as smartphones. They’re a great illustration of the evolution of robotics with their ability to accurately map their environment and vacuum a room without any accidents. 
You’ve probably encountered a plate-sized, ten-centimeter thick vacuum cleaning robot going about its business, cleaning the floor without any human intervention – or collisions.
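The distance calculation behind the ultrasonic sensor described above fits in a few lines. This is a minimal sketch, assuming the speed of sound is a constant 343 m/s (dry air at about 20 °C) and that the input is the echo's round-trip time in seconds; the function name `echo_time_to_distance_cm` is hypothetical. Because the sound wave travels to the object and back, the one-way distance is half of time × speed.

```python
# Speed of sound in dry air at roughly 20 °C, in meters per second (assumed constant).
SPEED_OF_SOUND_M_PER_S = 343.0


def echo_time_to_distance_cm(echo_duration_s: float) -> float:
    """Convert an ultrasonic echo round-trip time (seconds) into a one-way distance (cm)."""
    if echo_duration_s < 0:
        raise ValueError("echo duration must be non-negative")
    # The wave travels to the object and back, so halve the round-trip distance.
    round_trip_m = echo_duration_s * SPEED_OF_SOUND_M_PER_S
    return round_trip_m / 2 * 100


if __name__ == "__main__":
    # A 5.83 ms echo corresponds to an object roughly one meter away.
    print(round(echo_time_to_distance_cm(0.00583), 1))  # prints 100.0
```

In practice the speed of sound varies with air temperature, so a robot that needs better accuracy could adjust the constant using a temperature reading.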
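Many hobby ultrasonic sensors (the HC-SR04 is a common example) report the echo as a digital pulse on an "echo" pin whose width equals the round-trip time, which is what a Python GPIO library ends up reading. The sketch below assumes that kind of sensor and hides the hardware behind a hypothetical `read_echo` callable, so the timing logic can run without a Raspberry Pi. On real hardware, `read_echo` could wrap `GPIO.input(ECHO_PIN)` from RPi.GPIO, where `ECHO_PIN` is a placeholder for your wiring, called after the sensor has been triggered. The 50 ms timeout is also an assumption.

```python
import time
from typing import Callable


def measure_echo_duration(
    read_echo: Callable[[], bool],
    clock: Callable[[], float] = time.monotonic,
    timeout_s: float = 0.05,
) -> float:
    """Return how long the echo pin stays high, in seconds (the round-trip time).

    read_echo: hypothetical hook that returns True while the echo pin is high.
    clock: time source in seconds; injectable so the logic can be tested.
    """
    wait_start = clock()
    # Busy-wait for the rising edge that marks the start of the echo pulse.
    while not read_echo():
        if clock() - wait_start > timeout_s:
            raise TimeoutError("echo pulse never started")
    pulse_start = clock()
    # Busy-wait for the falling edge; the pulse width is the round-trip time.
    while read_echo():
        if clock() - pulse_start > timeout_s:
            raise TimeoutError("echo pulse never ended")
    return clock() - pulse_start
```

Busy-waiting in Python adds timing jitter, so treat the result as an approximate round-trip time; halving it and multiplying by the speed of sound gives the distance to the object.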
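The camera steps described earlier (frames as multidimensional arrays, a BGR-to-RGB conversion, and an added batch dimension) can also be illustrated with NumPy alone. This is a minimal sketch, assuming an OpenCV-style `height x width x 3` uint8 frame in BGR order; `to_model_input` is a hypothetical helper. Reversing the channel axis is a NumPy equivalent of `cv2.cvtColor(..., cv2.COLOR_BGR2RGB)` for 3-channel images, and `np.expand_dims` mirrors `tf.expand_dims`; this is not the code used in this post.

```python
import numpy as np


def to_model_input(frame_bgr: np.ndarray) -> np.ndarray:
    """Turn a BGR frame (height x width x 3, uint8) into a batched RGB array."""
    if frame_bgr.ndim != 3 or frame_bgr.shape[2] != 3:
        raise ValueError("expected a height x width x 3 image")
    # Reversing the last (channel) axis swaps B and R, like cv2.COLOR_BGR2RGB.
    rgb = np.ascontiguousarray(frame_bgr[..., ::-1], dtype=np.uint8)
    # Prepend a batch axis: shape becomes (1, height, width, 3).
    return np.expand_dims(rgb, axis=0)


if __name__ == "__main__":
    # A tiny synthetic 2 x 2 frame whose pixels are pure blue in BGR order.
    frame = np.zeros((2, 2, 3), dtype=np.uint8)
    frame[..., 0] = 255
    batch = to_model_input(frame)
    print(batch.shape)  # prints (1, 2, 2, 3)
```

After the conversion, the blue value sits in the last channel, which is where an RGB-trained model expects it.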
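For the optional use of cameras to measure how far objects are, one common approach relies on the stereo cameras listed among the perception hardware: a point's depth is inversely proportional to its disparity, the horizontal shift of that point between the left and right images. This sketch assumes a calibrated, rectified stereo pair with a known focal length (in pixels) and baseline (the distance between the two cameras, in meters), using Z = f · B / d; the function name and the example numbers are illustrative.

```python
def stereo_depth_m(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Depth (meters) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Assumed camera: 700 px focal length, cameras 12 cm apart, 42 px disparity.
    print(stereo_depth_m(42.0, 700.0, 0.12))  # prints 2.0
```

Because depth falls off as 1/d, small disparity errors matter most for distant objects, which is one reason roboticists often pair cameras with dedicated range sensors.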
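The three-step cycle described in this post (perception, planning and prediction, control) is often run as a continuous loop. Below is a hypothetical, hardware-free sketch, assuming the robot's only input is a single distance reading in centimeters (for example, from an ultrasonic sensor) and its only output is a pair of wheel speeds; the function names, the 20 cm safety threshold, and the speed values are illustrative assumptions, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    obstacle_distance_cm: float  # assumed to come from a distance sensor


def perceive(raw_distance_cm: float) -> Observation:
    """Perception: what does the environment look like?"""
    return Observation(obstacle_distance_cm=raw_distance_cm)


def plan(obs: Observation, safe_distance_cm: float = 20.0) -> str:
    """Planning and prediction: decide how to move through the environment."""
    return "turn" if obs.obstacle_distance_cm < safe_distance_cm else "forward"


def act(action: str) -> tuple[float, float]:
    """Control: map the plan onto (left, right) wheel speeds in [-1, 1]."""
    if action == "turn":
        return (0.5, -0.5)  # spin in place, away from the obstacle
    return (0.6, 0.6)  # drive straight ahead


def step(raw_distance_cm: float) -> tuple[float, float]:
    """One pass through the perception -> planning -> control cycle."""
    return act(plan(perceive(raw_distance_cm)))


if __name__ == "__main__":
    for reading in [150.0, 45.0, 12.0]:
        print(reading, step(reading))
```

Keeping each stage a separate function makes it easier to swap in a real sensor driver, a smarter planner, or a motor controller without touching the other stages.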
